Public Opinion

Overview

Introduction

The collection of public opinion through polling and interviews is a part of political culture. Politicians want to know what the public thinks. Campaign managers want to know how citizens will vote. Media members seek to write stories about what the public wants. Every day, polls take the pulse of the people and report the results. And yet we have to wonder: Why do we care what people think? This section explores that question.

Learning Objectives

By the end of this section, students will be able to:

- Define public opinion

- Explain how beliefs and ideology affect the formation of public opinion

- Explain how information about public opinion is gathered

- Identify common ways to measure and quantify public opinion

- Analyze polls to determine whether they accurately measure a population’s opinions

- Describe how polling is conducted in Texas

What is Public Opinion?

Public opinion is a collection of popular views about something, perhaps a person, a local or national event, or a new idea. For example, each day, a number of polling companies call Americans at random to ask whether they approve or disapprove of the way the president is guiding the economy.[1]

When situations arise at the state level, polling companies survey whether Texans support a particular policy. These individual opinions are collected together to be analyzed and interpreted for politicians and the media. The analysis examines how the public feels or thinks, so politicians can use the information to make decisions about their future legislative votes, campaign messages, or propaganda.

But where do people’s opinions come from? Most citizens base their political opinions on their beliefs[2] and their attitudes, both of which begin to form in childhood. Beliefs are closely held ideas that support our values and expectations about life and politics. For example, the idea that we are all entitled to equality, liberty, freedom, and privacy is a belief most people in Texas share. We may acquire this belief by growing up in Texas or by having come from a country that did not afford these valued principles to its citizens.

Our attitudes are also affected by our personal beliefs and represent the preferences we form based on our life experiences and values. A person who has suffered racism or bigotry may have a skeptical attitude toward the actions of authority figures, for example.

Over time, our beliefs and our attitudes about people, events, and ideas will become a set of norms, or accepted ideas, about what we may feel should happen in our society or what is right for the government to do in a situation. In this way, attitudes and beliefs form the foundation for opinions.

Measuring Public Opinion

Most public opinion polls aim to be accurate, but this is not an easy task. Political polling is a science. From design to implementation, polls are complex and require careful planning. Donald Trump's triumph in the 2016 presidential election is a recent example of the difficulty involved in measuring public opinion. Our history is littered with examples of polling companies producing results that incorrectly predicted public opinion due to poor survey design or bad polling methods.

In 1936, Literary Digest continued its tradition of polling citizens to determine who would win the presidential election. The magazine sent opinion cards to people who had a subscription, a phone, or a car registration. Only some of the recipients sent back their cards. The result? Alf Landon was predicted to win 55.4 percent of the popular vote; in the end, he received only 38 percent.[24]

Franklin D. Roosevelt won another term, but the story demonstrates the need to be scientific in conducting polls.

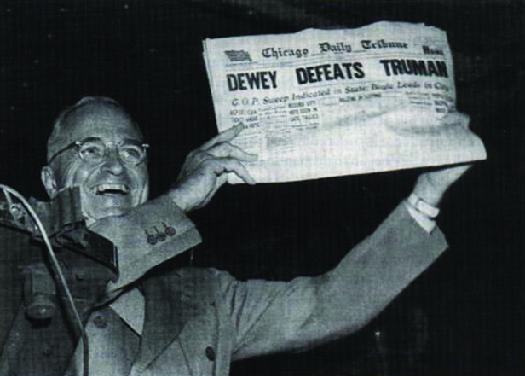

Twelve years later, Thomas Dewey lost the 1948 presidential election to Harry Truman, despite polls showing Dewey far ahead and Truman destined to lose. More recently, John Zogby, of Zogby Analytics, went public with his prediction that John Kerry would win the presidency against incumbent president George W. Bush in 2004, only to be proven wrong on election night. These are just a few cases, but each offers a different lesson. In 1948, pollsters did not poll up to the day of the election, relying on old numbers that did not include a late shift in voter opinion. Zogby’s polls did not represent likely voters and incorrectly predicted who would vote and for whom. These examples reinforce the need to use scientific methods when conducting polls, and to be cautious when reporting the results.

Most polling companies employ statisticians and methodologists trained in conducting polls and analyzing data. A number of criteria must be met if a poll is to be completed scientifically. First, the methodologists identify the desired population, or group, of respondents they want to interview. For example, if the goal is to project who will win the presidency, citizens from across the United States should be interviewed. If we wish to understand how voters in Texas will vote on a constitutional amendment, the population of respondents should only be Texas residents. When surveying on elections or policy matters, many polling houses will interview only respondents who have a history of voting in previous elections, because these voters are more likely to go to the polls on Election Day. Politicians are more likely to be influenced by the opinions of proven voters than of everyday citizens. Once the desired population has been identified, the researchers will begin to build a sample that is both random and representative.

A random sample consists of a limited number of people from the overall population, selected in such a way that each has an equal chance of being chosen. In the early years of polling, telephone numbers of potential respondents were arbitrarily selected from various areas to avoid regional bias. While landline phones allow polls to try to ensure randomness, the increasing use of cell phones makes this process difficult. Cell phones and their numbers are portable and move with the owner. To compensate, polls that include known cellular numbers may screen for zip codes and other geographic indicators to prevent regional bias. A representative sample consists of a group whose demographic distribution is similar to that of the overall population. For example, nearly 51 percent of the U.S. population is female.[25]

To match this demographic distribution of women, any poll intended to measure what most Americans think about an issue should survey a sample containing slightly more women than men.
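The two sample properties described above can be sketched in a few lines of Python. This is only an illustration, not a pollster's actual procedure: the population below is made up (10,000 hypothetical people, 51 percent of them female), and the helper names `draw_random_sample` and `share_female` are assumptions introduced for this example.

```python
import random

def draw_random_sample(population, sample_size, seed=None):
    """Simple random sample without replacement.

    Every member of the population has an equal chance of being chosen.
    """
    rng = random.Random(seed)
    return rng.sample(population, sample_size)

def share_female(people):
    """Fraction of the given group recorded as female."""
    return sum(1 for person in people if person["sex"] == "F") / len(people)

# Hypothetical population: 10,000 people, 51 percent female (illustrative only).
population = [{"id": i, "sex": "F" if i < 5100 else "M"} for i in range(10_000)]

# Draw 1,000 respondents at random and compare the sample to the population.
sample = draw_random_sample(population, 1000, seed=42)
print(f"Female share of population: {share_female(population):.1%}")
print(f"Female share of sample:     {share_female(sample):.1%}")
```

A random draw of this size will usually land close to the population's 51 percent, but not exactly on it. Comparing the two shares is one simple way to check whether a sample looks representative on a given demographic.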

Pollsters try to interview a set number of citizens to create a reasonable sample of the population. This sample size will vary based on the size of the population being interviewed and the level of accuracy the pollster wishes to reach. If the poll is trying to reveal the opinion of a state or group, such as the opinion of Wisconsin voters about changes to the education system, the sample size may vary from five hundred to one thousand respondents and produce results with relatively low error. For a poll to predict what Americans think nationally, such as about the White House’s policy on greenhouse gases, the sample size should be larger.

The sample size varies with each organization and institution due to the way the data are processed. Gallup often interviews only five hundred respondents, while Rasmussen Reports and Pew Research often interview one thousand to fifteen hundred respondents.[26] Academic organizations, like the American National Election Studies, have interviews with over twenty-five hundred respondents.[27]

A larger sample makes a poll more accurate because it will have relatively fewer unusual responses and be more representative of the actual population. Pollsters do not interview more respondents than necessary, however. Increasing the number of respondents will increase the accuracy of the poll, but once the poll has enough respondents to be representative, increases in accuracy become minor and are not cost-effective.[28]
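The diminishing return from adding respondents can be illustrated with the standard textbook approximation for the margin of error of a proportion. The formula is not given in this section, so treat the following as a sketch under stated assumptions: a simple random sample, a population much larger than the sample, a 95 percent confidence level (z = 1.96), and the worst-case 50/50 split of opinion.

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Approximate margin of error, in percentage points.

    Assumes a simple random sample of size n, an observed proportion p,
    and a 95 percent confidence level (z = 1.96). These are illustrative
    assumptions, not values taken from any particular polling company.
    """
    return 100 * z * math.sqrt(p * (1 - p) / n)

# Compare sample sizes in the range mentioned in the text.
for n in (500, 1000, 1500, 2500, 5000):
    print(f"n = {n:>5}: +/-{margin_of_error(n):.1f} points")
```

Under these assumptions, doubling the sample from 500 to 1,000 trims the margin from about 4.4 to about 3.1 points, while doubling again from 2,500 to 5,000 only moves it from about 2.0 to about 1.4. Each additional respondent buys less accuracy than the one before, which is why pollsters stop well short of interviewing everyone.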

When the sample represents the actual population, the poll’s accuracy will be reflected in a lower margin of error. The margin of error is a number that states how far the poll results may be from the actual opinion of the total population of citizens. The lower the margin of error, the more predictive the poll. Large margins of error are problematic. For example, if a poll that claims Hillary Clinton is likely to win 30 percent of the vote in the 2016 New York Democratic primary has a margin of error of +/-6 percentage points, it tells us that Clinton may receive as little as 24 percent of the vote (30 – 6) or as much as 36 percent (30 + 6). A lower margin of error is clearly desirable because it gives us the most precise picture of what people actually think or will do.
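The arithmetic in the Clinton example can be written as a small helper. The function name `poll_range` is an assumption introduced here for illustration; it simply subtracts and adds the margin of error and keeps the result between 0 and 100 percent.

```python
def poll_range(estimate, margin):
    """Return the (low, high) range implied by a poll estimate and its margin of error.

    Both arguments are in percentage points. The range is clamped to 0-100
    because a vote share cannot fall outside those bounds.
    """
    low = max(0, estimate - margin)
    high = min(100, estimate + margin)
    return low, high

# The example from the text: 30 percent with a margin of error of +/-6 points.
low, high = poll_range(30, 6)
print(f"Estimated support: between {low} and {high} percent")
```

With a margin of 3 points instead of 6, the same estimate would span 27 to 33 percent, a range half as wide.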

With many polls out there, how do you know whether a poll is a good poll and accurately predicts what a group believes? First, look for the numbers. Polling companies include the margin of error, polling dates, number of respondents, and population sampled to show their scientific reliability. Was the poll recently taken? Is the question clear and unbiased? Was the number of respondents high enough to predict the population? Is the margin of error small? It is worth looking for this valuable information when you interpret poll results. While most polling agencies strive to create quality polls, other organizations want fast results and may prioritize immediate numbers over random and representative samples. For example, instant polling is often used by news networks to quickly assess how well candidates are performing in a debate.

Technology and Survey Research

The days of randomly walking neighborhoods and phone book cold-calling to interview random citizens are gone. Scientific polling has made interviewing more deliberate. Historically, many polls were conducted in person, yet this was expensive and yielded problematic results.

In some situations and countries, face-to-face interviewing still exists. Exit polls, focus groups, and some public opinion polls occur in which the interviewer and respondents communicate in person. Exit polls are conducted in person, with an interviewer standing near a polling location and requesting information as voters leave the polls. Focus groups often select random respondents from local shopping places or pre-select respondents from Internet or phone surveys. The respondents show up to observe or discuss topics and are then surveyed.

When organizations like Gallup or Roper decide to conduct face-to-face public opinion polls, however, it is a time-consuming and expensive process. The organization must randomly select households or polling locations within neighborhoods, making sure there is a representative household or location in each neighborhood.[29]

Then it must survey a representative number of neighborhoods from within a city. At a polling location, interviewers may have directions on how to randomly select voters of varied demographics. If the interviewer is looking to interview a person in a home, multiple attempts are made to reach a respondent if he or she does not answer. Gallup conducts face-to-face interviews in areas where fewer than 80 percent of households have phones because it gives a more representative sample.[30]

News networks use face-to-face techniques to conduct exit polls on Election Day.

Most polling now occurs over the phone or through the Internet. Some companies, like Harris Interactive, maintain directories that include registered voters, consumers, or previously interviewed respondents. If pollsters need to interview a particular population, such as political party members or retirees of a specific pension fund, the company may purchase or access a list of phone numbers for that group. Other organizations, like Gallup, use random-digit-dialing (RDD), in which a computer randomly generates phone numbers with desired area codes. Using RDD allows the pollsters to include respondents who may have unlisted and cellular numbers.[31]

Questions about ZIP code or demographics may be asked early in the poll to allow the pollsters to determine which interviews to continue and which to end early.
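The idea behind random-digit dialing can be sketched as follows. This is a simplified illustration, not Gallup's actual system: real RDD designs typically draw from telephone blocks known to be in service, and the function name `random_digit_dial` is an assumption made for this example. The area codes used (512, 713, and 214) cover Austin, Houston, and Dallas.

```python
import random

def random_digit_dial(area_codes, count, seed=None):
    """Generate random ten-digit phone numbers within the given area codes.

    Simplified sketch: the exchange and line number are drawn uniformly at
    random, so listed, unlisted, and cellular numbers are all equally likely
    to come up. Many generated numbers will not be in service.
    """
    rng = random.Random(seed)
    numbers = []
    for _ in range(count):
        area = rng.choice(area_codes)
        exchange = rng.randint(200, 999)  # exchanges do not begin with 0 or 1
        line = rng.randint(0, 9999)
        numbers.append(f"({area}) {exchange}-{line:04d}")
    return numbers

# Generate five candidate numbers for a hypothetical Texas poll.
numbers = random_digit_dial(["512", "713", "214"], count=5, seed=1)
for number in numbers:
    print(number)
```

Because cell phone numbers move with their owners, a number generated for a Texas area code may reach someone living elsewhere, which is why the early ZIP code and demographic questions described above are needed to screen respondents.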

The interviewing process is also partly computerized. Many polls are now administered through computer-assisted telephone interviewing (CATI) or through robo-polls. A CATI system calls random telephone numbers until it reaches a live person and then connects the potential respondent with a trained interviewer. As the respondent provides answers, the interviewer enters them directly into the computer program. These polls may have some errors if the interviewer enters an incorrect answer. The polls may also have reliability issues if the interviewer goes off the script or answers respondents’ questions.

Robo-polls are entirely computerized. A computer dials random or pre-programmed numbers and a prerecorded electronic voice administers the survey. The respondent listens to the question and possible answers and then presses numbers on the phone to enter responses. Proponents argue that respondents are more honest without an interviewer. However, these polls can suffer from error if the respondent does not use the correct keypad number to answer a question or misunderstands the question. Robo-polls may also have lower response rates because there is no live person to persuade the respondent to answer. There is also no way to prevent children from answering the survey. Lastly, the Telephone Consumer Protection Act (1991) made automated calls to cell phones illegal, which leaves a large population of potential respondents inaccessible to robo-polls.[32]

The latest challenges in telephone polling come from the shift in phone usage. A growing number of citizens, especially younger citizens, use only cell phones, and their phone numbers are no longer based on geographic areas. The millennial generation (currently aged 18–33) is also more likely to text than to answer an unknown call, so it is harder to interview this demographic group. Polling companies now must reach out to potential respondents using email and social media to ensure they have a representative group of respondents.

Yet, the technology required to move to the Internet and handheld devices presents further problems. Web surveys must be designed to run on a variety of browsers and handheld devices. Online polls cannot detect whether a person with multiple email accounts or social media profiles answers the same poll multiple times, nor can they tell when a respondent misrepresents demographics in the poll or on a social media profile used in a poll. These factors also make it more difficult to calculate response rates or achieve a representative sample. Yet, many companies are working with these difficulties, because it is necessary to reach younger demographics in order to provide accurate data.[33]

Problems in Survey Research

For a number of reasons, polls may not produce accurate results. Two important factors a polling company faces are timing and human nature. Unless you conduct an exit poll during an election and interviewers stand at the polling places on Election Day to ask voters how they voted, there is always the possibility the poll results will be wrong. The simplest reason is that if there is time between the poll and Election Day, a citizen might change his or her mind, lie, or choose not to vote at all. Timing is very important during elections because surprise events can shift enough opinions to change an election result. Of course, there are many other reasons why polls, even those not time-bound by elections or events, may be inaccurate.

Polls begin with a list of carefully written questions. The questions need to be free of framing, meaning they should not be worded to lead respondents to a particular answer. For example, take two questions about approval of the job the Texas Governor is doing. Question 1 might ask, “Given the number of school shootings, do you approve of the job Governor Abbott is doing?” Question 2 might ask, “Do you approve of the job Governor Abbott is doing?” Both questions want to know how respondents perceive the Governor’s success, but the first question sets up a frame for the respondent to believe school shootings are a problem before answering. This is likely to make the respondent’s answer more negative. Similarly, the way we refer to an issue or concept can affect the way listeners perceive it. The phrase “estate tax” did not rally voters to protest the inheritance tax, but the phrase “death tax” sparked debate about whether taxing estates imposed a double tax on income.[34]

Many polling companies try to avoid leading questions, which lead respondents to select a predetermined answer, because they want to know what people really think. Some polls, however, have a different goal. Their questions are written to guarantee a specific outcome, perhaps to help a candidate get press coverage or gain momentum. These are called push polls. In the 2016 presidential primary race, MoveOn tried to encourage Senator Elizabeth Warren (D-MA) to enter the race for the Democratic nomination. Its poll used leading questions for what it termed an “informed ballot,” and, to show that Warren would do better than Hillary Clinton, it included ten positive statements about Warren before asking whether the respondent would vote for Clinton or Warren.[35]

The poll results were blasted by some in the media for being fake.

Sometimes lack of knowledge affects the results of a poll. Respondents may not know that much about the polling topic but are unwilling to say, “I don’t know.” For this reason, surveys may contain a quiz with questions that determine whether the respondent knows enough about the situation to answer survey questions accurately. A poll to discover whether citizens support changes to the Affordable Care Act or Medicaid might first ask who these programs serve and how they are funded. Polls about territory seizure by the Islamic State (or ISIS) or Russia’s aid to rebels in Ukraine may include a set of questions to determine whether the respondent reads or hears any international news. Respondents who cannot answer correctly may be excluded from the poll, or their answers may be separated from the others.

People may also feel social pressure to answer questions in accordance with the norms of their area or peers.[36]

If they are embarrassed to admit how they would vote, they may lie to the interviewer. In the 1982 governor’s race in California, Tom Bradley was far ahead in the polls, yet on Election Day he lost. This result was nicknamed the Bradley effect, on the theory that voters who answered the poll were afraid to admit they would not vote for a black man because it would appear politically incorrect and racist.

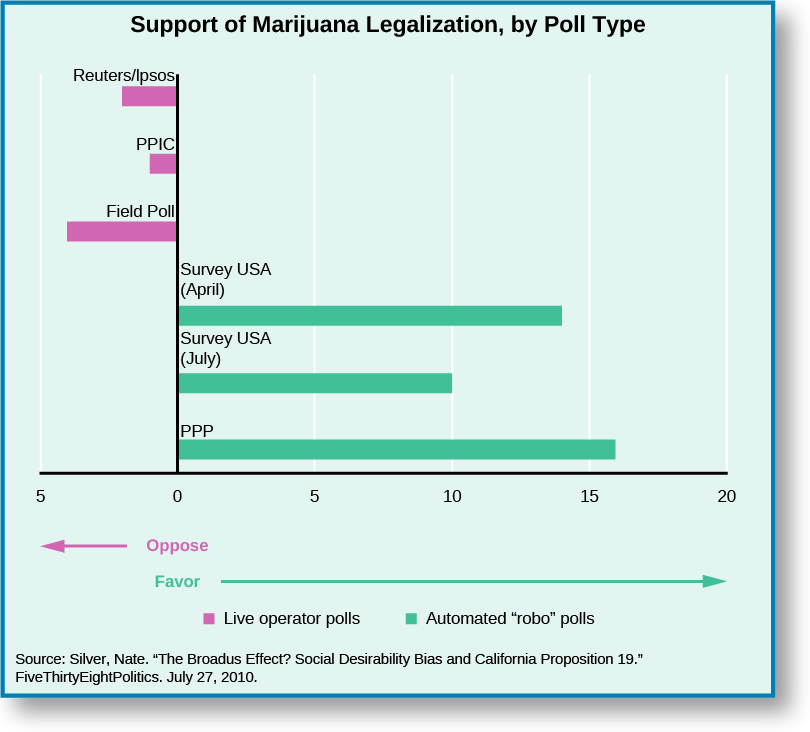

In 2010, Proposition 19, which would have legalized and taxed marijuana in California, met with a new version of the Bradley effect. Nate Silver, a political blogger, noticed that polls on the marijuana proposition were inconsistent, sometimes showing the proposition would pass and other times showing it would fail. Silver compared the polls and the way they were administered because some polling companies used an interviewer and some used robocalling. He then proposed that voters speaking with a live interviewer gave the socially acceptable answer that they would vote against Proposition 19, while voters interviewed by a computer felt free to be honest.[37]

While this theory has not been proven, it is consistent with other findings that interviewer demographics can affect respondents’ answers. African Americans, for example, may give different responses to interviewers who are white than to interviewers who are black.[38]

Push Polls

One of the newer byproducts of polling is the creation of push polls, which consist of political campaign information presented as polls. A respondent is called and asked a series of questions about his or her position or candidate selections. If the respondent’s answers are for the wrong candidate, the next questions will give negative information about the candidate in an effort to change the voter’s mind.

In 2014, a fracking ban was placed on the ballot in a town in Texas. Fracking, which includes injecting pressurized water into drilled wells, helps energy companies collect additional gas from the earth. It is controversial, with opponents arguing it causes water pollution, sound pollution, and earthquakes. During the campaign, a number of local voters received a call that polled them on how they planned to vote on the proposed fracking ban.[39]

If the respondent was unsure about or planned to vote for the ban, the questions shifted to provide negative information about the organizations proposing the ban. One question asked, “If you knew the following, would it change your vote . . . two Texas railroad commissioners, the state agency that oversees oil and gas in Texas, have raised concerns about Russia’s involvement in the anti-fracking efforts in the U.S.?” The question played upon voter fears about Russia and international instability in order to convince them to vote against the fracking ban.

These techniques are not limited to issue votes; candidates have used them to attack their opponents. The hope is that voters will think the poll is legitimate and believe the negative information provided by a “neutral” source.

Public Opinion and Elections

Elections are the events on which opinion polls have the greatest measured effect. Public opinion polls do more than show how we feel on issues or project who might win an election. The media use public opinion polls to decide which candidates are ahead of the others and therefore of interest to voters and worthy of an interview. From the moment President Obama was inaugurated for his second term, speculation began about who would run in the 2016 presidential election. Within a year, potential candidates were being ranked and compared by a number of newspapers.[40]

The speculation included favorability polls on Hillary Clinton, which measured how positively voters felt about her as a candidate. The media deemed these polls important because they showed Clinton as the frontrunner for the Democrats in the next election.[41]

During the presidential primary season, we see examples of the bandwagon effect, in which the media pays more attention to candidates who poll well during the fall and the first few primaries. Bill Clinton was nicknamed the “Comeback Kid” in 1992 after he placed second in the New Hampshire primary despite accusations of adultery with Gennifer Flowers. The media’s attention on Clinton gave him the momentum to make it through the rest of the primary season, ultimately winning the Democratic nomination and the presidency.

Polling is also at the heart of horserace coverage, in which, just like an announcer at the racetrack, the media calls out every candidate’s move throughout the presidential campaign. Horserace coverage can be neutral, positive, or negative, depending upon what polls or facts are covered. During the 2012 presidential election, the Pew Research Center found that both Mitt Romney and President Obama received more negative than positive horserace coverage, with Romney’s growing more negative as he fell in the polls.[42]

Horserace coverage is often criticized for its lack of depth; the stories skip over the candidates’ issue positions, voting histories, and other facts that would help voters make an informed decision. Yet, horserace coverage is popular because the public is always interested in who will win, and it often makes up a third or more of news stories about the election.[43]

Exit polls, taken the day of the election, are the last election polls conducted by the media. Announced results of these surveys can deter voters from going to the polls if they believe the election has already been decided.

Public opinion polls also affect how much money candidates receive in campaign donations. Donors assume public opinion polls are accurate enough to determine who the top two to three primary candidates will be, and they give money to those who do well. Candidates who poll at the bottom will have a hard time collecting donations, increasing the odds that they will continue to do poorly. This was apparent in the run-up to the 2016 presidential election. Bernie Sanders, Hillary Clinton, and Martin O’Malley each campaigned in the hope of becoming the Democratic presidential nominee. In June 2015, 75 percent of Democrats likely to vote in their state primaries said they would vote for Clinton, while 15 percent of those polled said they would vote for Sanders. Only 2 percent said they would vote for O’Malley.[44]

During this same period, Clinton raised $47 million in campaign donations, Sanders raised $15 million, and O’Malley raised $2 million.[45]

By September 2015, 23 percent of likely Democratic voters said they would vote for Sanders,[46] and his summer fundraising total increased accordingly.[47]

Presidents running for reelection also must perform well in public opinion polls, and being in office may not provide an automatic advantage. Americans often think about both the future and the past when they decide which candidate to support.[48]

They have three years of past information about the sitting president, so they can better predict what will happen if the incumbent is reelected. That makes it difficult for the president to mislead the electorate. Voters also want a future that is prosperous. Not only should the economy look good, but citizens want to know they will do well in that economy.[49]

For this reason, daily public approval polls sometimes act as both a referendum on the president and a predictor of success.

Survey Research in Texas

Most polling is conducted at the national level; far fewer polls are conducted at the state level. In Texas, we’re fortunate to have the University of Texas/Texas Tribune Poll.

Beginning in 2008, the Texas Politics Project at the University of Texas (UT), under the direction of James Henson and Joshua Blank, has conducted three to four statewide public opinion polls each year to assess the opinions of registered voters on upcoming elections, public policy, and attitudes towards politics, politicians, and government.

In 2009, UT partnered with the Texas Tribune and continued to regularly measure public opinion in Texas, making the data freely available to students, researchers, and the general public in the project’s data archive. To see what Texans are thinking about politics, or to read some of the project’s own analysis, please visit its Polling Page, where you’ll find a wealth of information on public opinion in Texas.

Notes

CC LICENSED CONTENT, ORIGINAL

Revision and Adaptation. Authored by: Daniel M. Regalado. License: CC BY: Attribution

- Gallup. 2015. “Gallup Daily: Obama Job Approval.” Gallup. June 6, 2015. http://www.gallup.com/poll/113980/Gallup-Daily-Obama-Job-Approval.aspx (February 17, 2016); Rasmussen Reports. 2015. “Daily Presidential Tracking Poll.” Rasmussen Reports. June 6, 2015. http://www.rasmussenreports.com/public_content/politics/obama_administration/daily_presidential_tracking_poll (February 17, 2016); Roper Center. 2015. “Obama Presidential Approval.” Roper Center. June 6, 2015. http://www.ropercenter.uconn.edu/polls/presidential-approval/ (February 17, 2016). ↵

- V. O. Key, Jr. 1966. The Responsible Electorate. Harvard University: Belknap Press. ↵

- John Zaller. 1992. The Nature and Origins of Mass Opinion. Cambridge: Cambridge University Press. ↵

- Eitan Hersh. 2013. “Long-Term Effect of September 11 on the Political Behavior of Victims’ Families and Neighbors.” Proceedings of the National Academy of Sciences of the United States of America 110 (52): 20959–63. ↵

- M. Kent Jennings. 2002. “Generation Units and the Student Protest Movement in the United States: An Intra- and Intergenerational Analysis.” Political Psychology 23 (2): 303–324. ↵

- United States Senate. 2015. “Party Division in the Senate, 1789-Present,” United States Senate. June 5, 2015. http://www.senate.gov/pagelayout/history/one_item_and_teasers/partydiv.htm (February 17, 2016). History, Art & Archives. 2015. “Party Divisions of the House of Representatives: 1789–Present.” United States House of Representatives. June 5, 2015. http://history.house.gov/Institution/Party-Divisions/Party-Divisions/ (February 17, 2016). ↵

- V. O. Key Jr. 1955. “A Theory of Critical Elections.” Journal of Politics 17 (1): 3–18. ↵

- Pew Research Center. 2014. “Political Polarization in the American Public.” Pew Research Center. June 12, 2014. http://www.people-press.org/2014/06/12/political-polarization-in-the-american-public/ (February 17, 2016). ↵

- Pew Research Center. 2015. “American Values Survey.” Pew Research Center. http://www.people-press.org/values-questions/ (February 17, 2016). ↵

- Virginia Chanley. 2002. “Trust in Government in the Aftermath of 9/11: Determinants and Consequences.” Political Psychology 23 (3): 469–483. ↵

- Deborah Schildkraut. 2002. “The More Things Change… American Identity and Mass and Elite Responses to 9/11.” Political Psychology 23 (3): 532. ↵

- Joseph Bafumi and Robert Shapiro. 2009. “A New Partisan Voter.” The Journal of Politics 71 (1): 1–24. ↵

- Liz Marlantes, “After 9/11, the Body Politic Tilts to Conservatism,” Christian Science Monitor, 16 January 2002. ↵

- Liping Weng. 2010. “Shanghai Children’s Value Socialization and Its Change: A Comparative Analysis of Primary School Textbooks.” China Media Research 6 (3): 36–43. ↵

- David Easton. 1965. A Systems Analysis of Political Life. New York: John Wiley. ↵

- Angus Campbell, Philip Converse, Warren Miller, and Donald Stokes. 2008. The American Voter: Unabridged Edition. Chicago: University of Chicago Press. Michael S. Lewis-Beck, William G. Jacoby, Helmut Norpoth, and Herbert F. Weisberg. 2008. The American Voter Revisited. Ann Arbor: University of Michigan Press. ↵

- Russell Dalton. 1980. “Reassessing Parental Socialization: Indicator Unreliability versus Generational Transfer.” American Political Science Review 74 (2): 421–431. ↵

- Michael S. Lewis-Beck, William G. Jacoby, Helmut Norpoth, and Herbert F. Weisberg. 2008. The American Voter Revisited. Ann Arbor: University of Michigan Press. ↵

- Michael Lipka. 2013. “What Surveys Say about Worship Attendance—and Why Some Stay Home.” Pew Research Center. September 13, 2013. http://www.pewresearch.org/fact-tank/2013/09/13/what-surveys-say-about-worship-attendance-and-why-some-stay-home/ (February 17, 2016). ↵

- Arthur Lupia and Mathew D. McCubbins. 1998. The Democratic Dilemma: Can Citizens Learn What They Need to Know? New York: Cambridge University Press. John Barry Ryan. 2011. “Social Networks as a Shortcut to Correct Voting.” American Journal of Political Science 55 (4): 753–766. ↵

- Sarah Bowen. 2015. “A Framing Analysis of Media Coverage of the Rodney King Incident and Ferguson, Missouri, Conflicts.” Elon Journal of Undergraduate Research in Communications 6 (1): 114–124. ↵

- Frederick Engels. 1847. The Principles of Communism. Trans. Paul Sweezy. https://www.marxists.org/archive/marx/works/1847/11/prin-com.htm (February 17, 2016). ↵

- Libertarian Party. 2014. “Libertarian Party Platform.” June. http://www.lp.org/platform (February 17, 2016). ↵

- Arthur Evans, “Predict Landon Electoral Vote to be 315 to 350,” Chicago Tribune, 18 October 1936. ↵

- United States Census Bureau. 2012. “Age and Sex Composition in the United States: 2012.” United States Census Bureau. http://www.census.gov/population/age/data/2012comp.html (February 17, 2016). ↵

- Rasmussen Reports. 2015. “Daily Presidential Tracking Poll.” Rasmussen Reports. September 27, 2015. http://www.rasmussenreports.com/public_content/politics/obama_administration/daily_presidential_tracking_poll (February 17, 2016); Pew Research Center. 2015. “Sampling.” Pew Research Center. http://www.pewresearch.org/methodology/u-s-survey-research/sampling/ (February 17, 2016). ↵

- American National Election Studies Data Center. 2016. http://electionstudies.org/studypages/download/datacenter_all_NoData.php (February 17, 2016). ↵

- Michael W. Link and Robert W. Oldendick. 1997. “‘Good’ Polls / ‘Bad’ Polls – How Can You Tell? Ten Tips for Consumers of Survey Research.” South Carolina Policy Forum. http://www.ipspr.sc.edu/publication/Link.htm (February 17, 2016); Pew Research Center. 2015. “Sampling.” Pew Research Center. http://www.pewresearch.org/methodology/u-s-survey-research/sampling/ (February 17, 2016). ↵

- Roper Center. 2015. “Polling Fundamentals – Sampling.” Roper. http://www.ropercenter.uconn.edu/support/polling-fundamentals-sampling/ (February 17, 2016). ↵

- Gallup. 2015. “How Does the Gallup World Poll Work?” Gallup. http://www.gallup.com/178667/gallup-world-poll-work.aspx (February 17, 2016). ↵

- Gallup. 2015. “Does Gallup Call Cellphones?” Gallup. http://www.gallup.com/poll/110383/does-gallup-call-cell-phones.aspx (February 17, 2016). ↵

- Mark Blumenthal, “The Case for Robo-Pollsters: Automated Interviewers Have Their Drawbacks, But Fewer Than Their Critics Suggest,” National Journal, 14 September 2009. ↵

- Mark Blumenthal, “Is Polling As We Know It Doomed?” National Journal, 10 August 2009. ↵

- Frank Luntz. 2007. Words That Work: It’s Not What You Say, It’s What People Hear. New York: Hyperion. ↵

- Aaron Blake, “This terrible poll shows Elizabeth Warren beating Hillary Clinton,” Washington Post, 11 February 2015. ↵

- Nate Silver. 2010. “The Broadus Effect? Social Desirability Bias and California Proposition 19.” FiveThirtyEightPolitics. July 27, 2010. http://fivethirtyeight.com/features/broadus-effect-social-desirability-bias/ (February 18, 2016). ↵

- Nate Silver. 2010. “The Broadus Effect? Social Desirability Bias and California Proposition 19.” FiveThirtyEightPolitics. July 27, 2010. http://fivethirtyeight.com/features/broadus-effect-social-desirability-bias/ (February 18, 2016). ↵

- D. Davis. 1997. “The Direction of Race of Interviewer Effects among African-Americans: Donning the Black Mask.” American Journal of Political Science 41 (1): 309–322. ↵

- Kate Sheppard, “Top Texas Regulator: Could Russia be Behind City’s Proposed Fracking Ban?” Huffington Post, 16 July 2014. http://www.huffingtonpost.com/2014/07/16/fracking-ban-denton-russia_n_5592661.html (February 18, 2016). ↵

- Paul Hitlin. 2013. “The 2016 Presidential Media Primary Is Off to a Fast Start.” Pew Research Center. October 3, 2013. http://www.pewresearch.org/fact-tank/2013/10/03/the-2016-presidential-media-primary-is-off-to-a-fast-start/ (February 18, 2016). ↵

- Pew Research Center. 2015. “Hillary Clinton’s Favorability Ratings over Her Career.” Pew Research Center. June 6, 2015. http://www.pewresearch.org/wp-content/themes/pewresearch/static/hillary-clintons-favorability-ratings-over-her-career/ (February 18, 2016). ↵

- Pew Research Center. 2012. “Winning the Media Campaign.” Pew Research Center. November 2, 2012. http://www.journalism.org/2012/11/02/winning-media-campaign-2012/ (February 18, 2016). ↵

- Pew Research Center. 2012. “Fewer Horserace Stories-and Fewer Positive Obama Stories-Than in 2008.” Pew Research Center. November 2, 2012. http://www.journalism.org/2012/11/01/press-release-6/ (February 18, 2016). ↵

- Patrick O’Connor. 2015. “WSJ/NBC Poll Finds Hillary Clinton in a Strong Position.” Wall Street Journal. June 23, 2015. http://www.wsj.com/articles/new-poll-finds-hillary-clinton-tops-gop-presidential-rivals-1435012049. ↵

- Federal Election Commission. 2015. “Presidential Receipts.” http://www.fec.gov/press/summaries/2016/tables/presidential/presreceipts_2015_q2.pdf (February 18, 2016). ↵

- Susan Page and Paulina Firozi, “Poll: Hillary Clinton Still Leads Sanders and Biden But By Less,” USA Today, 1 October 2015. ↵

- Dan Merica, and Jeff Zeleny. 2015. “Bernie Sanders Nearly Outraises Clinton, Each Post More Than $20 Million.” CNN. October 1, 2015. http://www.cnn.com/2015/09/30/politics/bernie-sanders-hillary-clinton-fundraising/index.html?eref=rss_politics (February 18, 2016). ↵

- Robert S. Erikson, Michael B. MacKuen, and James A. Stimson. 2000. “Bankers or Peasants Revisited: Economic Expectations and Presidential Approval.” Electoral Studies 19: 295–312. ↵

- Erikson et al, “Bankers or Peasants Revisited: Economic Expectations and Presidential Approval.” ↵

- Michael B. MacKuen, Robert S. Erikson, and James A. Stimson. 1989. “Macropartisanship.” American Political Science Review 83 (4): 1125–1142. ↵

- James A. Stimson, Michael B. MacKuen, and Robert S. Erikson. 1995. “Dynamic Representation.” American Political Science Review 89 (3): 543–565. ↵

- Stimson et al, “Dynamic Representation.” ↵

- Stimson et al, “Dynamic Representation.” ↵

- B. Dan Wood. 2009. The Myth of Presidential Representation. New York: Cambridge University Press, 96–97. ↵

- Wood, Myth of Presidential Representation. ↵

- U.S. Election Atlas. 2015. “United States Presidential Election Results.” U.S. Election Atlas. June 22, 2015. http://uselectionatlas.org/RESULTS/ (February 18, 2016). ↵

- Richard Fleisher, and Jon R. Bond. 1996. “The President in a More Partisan Legislative Arena.” Political Research Quarterly 49 (4): 729–748. ↵

- George C. Edwards III, and B. Dan Wood. 1999. “Who Influences Whom? The President, Congress, and the Media.” American Political Science Review 93 (2): 327–344. ↵

- Pew Research Center. 2013. “Public Opinion Runs Against Syrian Airstrikes.” Pew Research Center. September 4, 2013. http://www.people-press.org/2013/09/03/public-opinion-runs-against-syrian-airstrikes/ (February 18, 2016). ↵

- Paul Bedard. 2013. “Poll-Crazed Clinton Even Polled on His Dog’s Name.” Washington Examiner. April 30, 2013. http://www.washingtonexaminer.com/poll-crazed-bill-clinton-even-polled-on-his-dogs-name/article/2528486. ↵

- Stimson et al, “Dynamic Representation.” ↵

- Suzanna De Boef, and James A. Stimson. 1995. “The Dynamic Structure of Congressional Elections.” Journal of Politics 57 (3): 630–648. ↵

- Stimson et al, “Dynamic Representation.” ↵

- Stimson et al, “Dynamic Representation.” ↵

- Benjamin Cardozo. 1921. The Nature of the Judicial Process. New Haven: Yale University Press. ↵

- Jack Knight, and Lee Epstein. 1998. The Choices Justices Make. Washington DC: CQ Press. ↵

- Kevin T. McGuire, Georg Vanberg, Charles E. Smith, and Gregory A. Caldeira. 2009. “Measuring Policy Content on the U.S. Supreme Court.” Journal of Politics 71 (4): 1305–1321. ↵

- Kevin T. McGuire, and James A. Stimson. 2004. “The Least Dangerous Branch Revisited: New Evidence on Supreme Court Responsiveness to Public Preferences.” Journal of Politics 66 (4): 1018–1035. ↵

- Thomas Marshall. 1989. Public Opinion and the Supreme Court. Boston: Unwin Hyman. ↵

- Christopher J. Casillas, Peter K. Enns, and Patrick C. Wohlfarth. 2011. “How Public Opinion Constrains the U.S. Supreme Court.” American Journal of Political Science 55 (1): 74–88. ↵

- Town of Greece v. Galloway 572 U.S. ___ (2014). ↵

- “Religion.” Gallup. June 18, 2015. http://www.gallup.com/poll/1690/Religion.aspx (February 18, 2016). ↵

- Rebecca Riffkin. 2015. “In U.S., Support for Daily Prayer in Schools Dips Slightly.” Gallup. September 25, 2015. http://www.gallup.com/poll/177401/support-daily-prayer-schools-dips-slightly.aspx. ↵

- Gallup. 2015. “Supreme Court.” Gallup. http://www.gallup.com/poll/4732/supreme-court.aspx (February 18, 2016). ↵

- Stimson et al, “Dynamic Representation.” ↵